The Sky Is Not Falling: Why Every Generation Thinks Their Job Crisis Is The Last One

On AI, adaptation, and the very human habit of catastrophizing change

Let’s start with a confession: I find the AI panic fascinating. Not because the concern is stupid; it isn’t. It fascinates me because it is so perfectly human. Every generation, without fail, has looked at the machine of its era and concluded that this time, finally, the economy has been broken beyond repair. This time, the jobs are actually gone. This time, we’ve crossed a threshold from which there is no return.

They were wrong every single time. And I want to make the case, quite carefully and with receipts, that the instinct is wrong again.

The Luddites Were Right About Everything Except the Ending

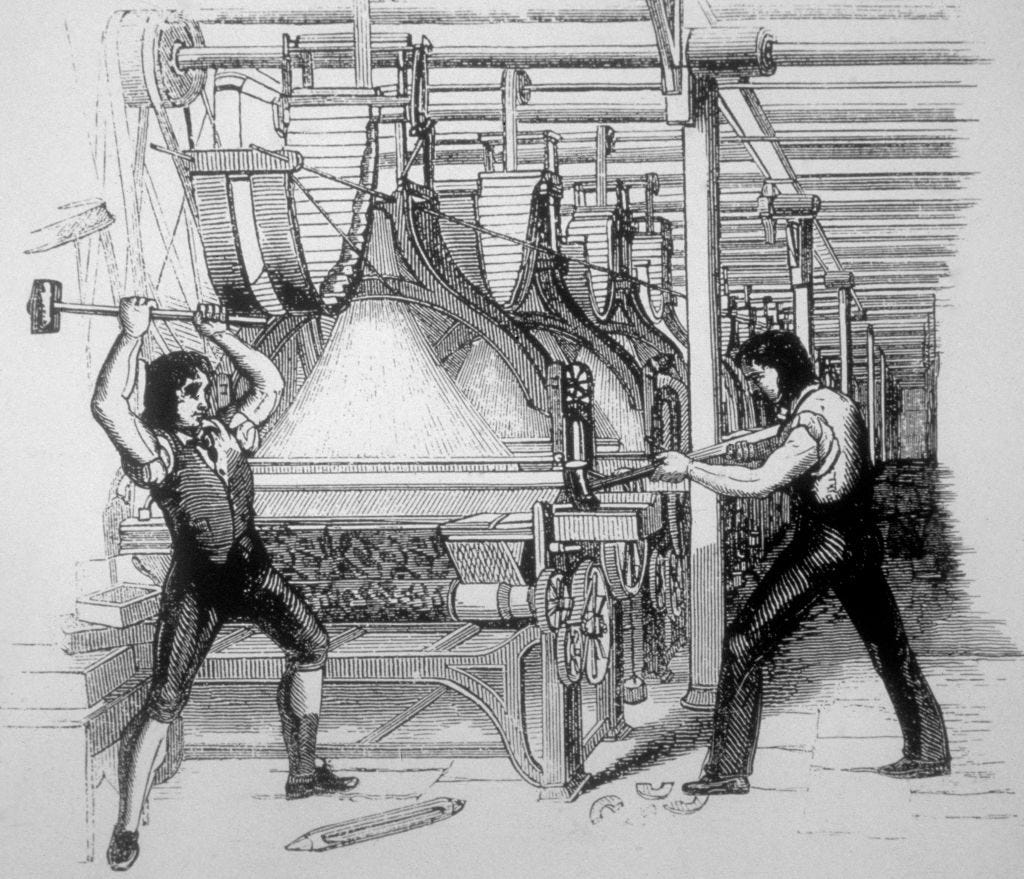

In 1811, skilled textile workers in Nottingham began smashing the mechanical looms that threatened their livelihoods. They weren’t stupid or irrational. The stocking frames and power looms did displace them. Their wages did fall in the short run. Their craft did become obsolete in the form they’d known it.

But here’s what didn’t happen: the total number of textile jobs didn’t collapse. By 1830, Britain’s textile industry employed more workers than before the looms, not fewer, just in different configurations, at different tasks, in factories rather than cottages. The economist David Ricardo, writing in 1821, was actually one of the first serious thinkers to entertain the possibility that machinery could cause permanent unemployment. He wasn’t entirely wrong about the short-term pain. But the long-term trajectory went the other direction entirely.

This is the pattern, repeated so consistently across two centuries that it almost gets boring: technology destroys specific tasks and specific job configurations, while simultaneously expanding the overall volume of economic activity, which creates new demand for labor, often in ways that nobody predicted.

The Spreadsheet That Was Going to End Accounting

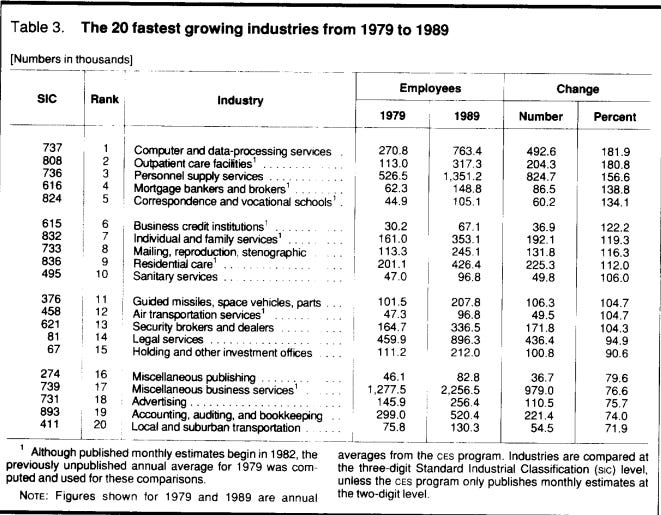

Let’s fast-forward to 1979, when VisiCalc, the world’s first commercial spreadsheet, landed on the Apple II. At the time, American companies employed roughly 400,000 bookkeepers and accounting clerks. The fear was immediate and understandable: if a machine could do in seconds what a bookkeeper spent days doing, why would you keep the bookkeeper?

What actually happened is one of the most instructive case studies in labor economics. According to research by economist James Bessen, the number of accounting clerk positions did fall per firm after spreadsheets arrived. But the cost of doing accounting work dropped so dramatically that demand for accounting services exploded. Firms that couldn’t previously afford serious financial analysis suddenly could. New regulatory complexity created new compliance needs. The result? The total number of people employed in accounting-related work in the US increased significantly through the 1980s and 1990s. The Bureau of Labor Statistics data shows that between 1980 and 2000, employment in accounting and auditing grew by over 150%.

Did the spreadsheet end accounting? Obviously not. It ended the specific task of manual ledger maintenance and redirected accountants toward higher-order work: analysis, forecasting, advisory services. The job changed. The profession survived and grew.

Every tool that was going to make your job obsolete ended up making your job bigger. Every single one. Until now, apparently. Sure.

ATMs and the Paradox of the Expanding Bank Teller

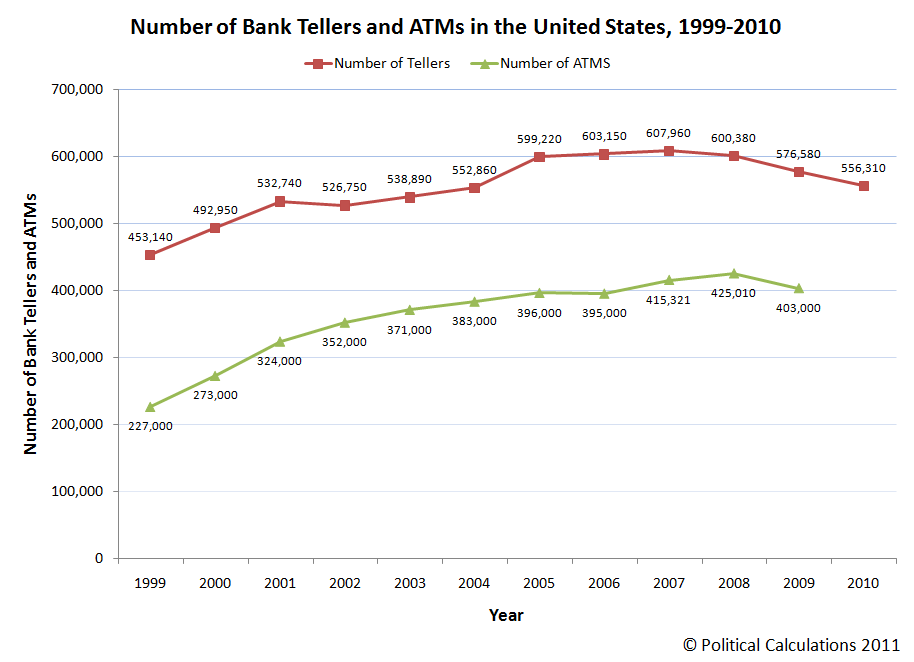

This one genuinely surprised economists when it was first studied seriously. The ATM was introduced commercially in the late 1960s and proliferated through the 1970s and 1980s. Surely, if any machine was going to eliminate a specific role comprehensively, an Automated Teller Machine eliminating tellers was the cleanest possible case.

Economist James Bessen is worth citing twice; his work here is essential. He found that between 1980 and 2010, while the number of ATMs in the US grew from essentially zero to around 400,000, the number of human bank tellers also increased, from approximately 300,000 to over 550,000.

How? Two mechanisms worked simultaneously. First, ATMs reduced the cost of running a bank branch, which meant banks opened more branches. Second, tellers were freed from the repetitive task of dispensing cash and redirected toward relationship-based work: loan conversations, financial product recommendations, customer issue resolution. The job changed substantially. The headcount grew, too.

This is not a cherry-picked example. Bessen’s broader research across dozens of occupations found that technological automation of specific tasks has very rarely led to the elimination of entire occupations in the way predicted. It has instead consistently led to task recomposition, where the human’s role shifts toward what the machine cannot yet replicate.

But Wait — This Time Really Is Different. Isn't It?

Here’s where I want to be genuinely fair to the anxiety, because dismissing it entirely would be intellectually dishonest.

The argument for “this time is different” with AI goes something like this: previous technologies automated physical or narrow cognitive tasks. The loom replaced hand-weaving. The spreadsheet replaced arithmetic. The ATM replaced cash dispensing. These were specific, bounded operations. Large language models and generative AI, by contrast, appear to be automating something closer to general cognition: reading, writing, reasoning, synthesis, code generation, image creation. If the thing being automated is cognition itself, not just a single cognitive task, then the conventional wisdom might not apply.

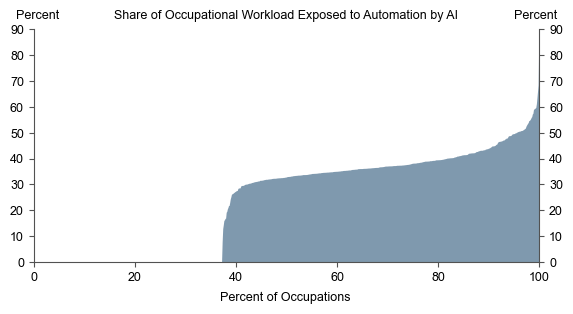

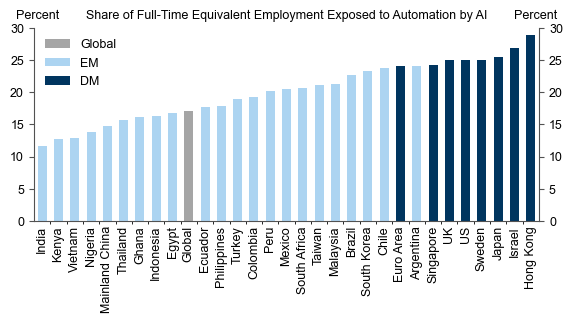

This is a serious argument, and economists are genuinely divided on it. A widely cited 2023 Goldman Sachs report (The Potentially Large Effects of Artificial Intelligence on Economic Growth) estimated that generative AI could expose roughly 300 million full-time jobs globally to automation or significant disruption. An MIT and Boston University study from 2020, examining the impact of robots in manufacturing, found more localized but real displacement effects in specific communities, with weaker reabsorption than historical patterns suggested.

So, I wouldn’t call the concern fabricated. The question, in my honest opinion, is whether "exposure to disruption" is the same as "elimination," and history suggests very strongly that it isn't.

AI is not the first thing that was supposed to make you irrelevant. It's just the first one that could write a paragraph about it.

The Great Readjustment, Not the Great Elimination

Here’s the frame I find most useful: what we are living through is a Great Readjustment, not an elimination event. The tasks that constitute any given job are being reshuffled, not deleted. And this is happening across every sector simultaneously, which is what makes it feel more total and terrifying than previous transitions. But trust me: simultaneity doesn’t mean finality.

Consider what’s actually happening sector by sector:

In law, AI tools like Harvey and CoCounsel are handling document review, contract analysis, and research summarization at extraordinary speed. This is genuinely disrupting junior associate work at large firms. But legal judgment (the adversarial reasoning, the courtroom dynamics, the client relationship management, the ethical complexity) remains deeply human. What’s changing is the leverage ratio: a senior attorney can now do more with fewer junior associates, but the demand for legal services is also expanding as AI lowers the cost of legal access for individuals and smaller businesses who previously couldn’t afford it.

In medicine, AI diagnostics are matching or exceeding radiologists at specific imaging tasks. A 2020 study in Nature (McKinney et al., "International evaluation of an AI system for breast cancer screening," 2020) showed AI performing comparably to expert radiologists in breast cancer detection. This sounds like an elimination story. But radiologists in well-resourced systems aren’t disappearing; they’re being repositioned toward complex, ambiguous cases, toward communicating with patients, toward integrating multi-source information that no single imaging AI can yet synthesize. And in the developing world, AI diagnostics are extending radiological capability to places that never had sufficient radiologists to begin with, which is genuinely expanding the overall addressable scope of the profession.

In software development, tools like GitHub Copilot are writing a meaningful percentage of routine code. A 2022 GitHub study (Productivity Assessment of Neural Code Completion, Ziegler et al.) found Copilot responsible for roughly 40% of code in files where it was enabled among surveyed developers. And yet the demand for software developers hasn’t collapsed (at least not to the degree being depicted). If anything, it’s growing, because cheaper code production means more software gets built, more products get attempted, more digital infrastructure gets created. Stack Overflow’s developer surveys have consistently shown that AI tools have increased developer productivity without corresponding employment declines.

In creative fields (writing, design, music), the disruption is real and the anxiety most acute. But here too, a more nuanced picture is emerging. AI can generate competent content at scale. It cannot yet generate cultural meaning, editorial judgment, or the kind of human perspective that audiences seek in long-form journalism, literary fiction, or distinctive artistic voice. What’s collapsing is the market for generic, commodity creative work. What’s persisting, and in some dimensions growing, is the premium on genuine voice, expertise, and creative originality.

The Transition Problem Is Real, Even If the Endpoint Isn't Catastrophic

The question was never whether AI changes work. Everything changes work. The question is whether you're the generation that adapts or the generation that spends ten years insisting it can't be done.

I want to be careful not to make this too comfortable, because there’s something important that the optimistic historical narrative can elide: transitions are hard, painful, and unevenly distributed.

When the textile mills transformed in the 19th century, the workers whose lives were disrupted didn’t live long enough to see the net employment gains. When manufacturing automation hollowed out the American Midwest in the 1980s and 1990s, the aggregate employment statistics looked fine nationally while specific communities were devastated in ways that persisted for decades. The McKinsey Global Institute's work on job transitions (Jobs Lost, Jobs Gained: Workforce Transitions in a Time of Automation, December 2017) consistently found that the people displaced by automation are rarely the same people who fill the new jobs it creates — there are geographic, educational, and age barriers that market forces alone don't solve.

So the honest version of the argument is: the net outcome across a full economic cycle is likely to look like every previous technology transition, with more jobs, different jobs, restructured jobs, and expanding overall economic activity. But the distribution of pain during the transition is a serious policy problem that optimism about the endpoint doesn’t excuse us from addressing.

What history shows is that societies which invested in retraining, education, and transition support (think of the GI Bill, Germany's apprenticeship model, or Singapore's SkillsFuture program) managed these transitions significantly better than those that left workers to navigate them alone.

The Specific Thing AI Cannot Do

There’s a deeper point underneath all the economic data. Every technology wave has automated what was, at that moment, the most legible and codifiable part of human work. The loom automated repetitive weaving patterns. The spreadsheet automated arithmetic operations. AI, in its current form, is automating pattern matching across language and images extraordinarily well.

What it cannot yet do, and what the research literature on large language models consistently flags, is genuine causal reasoning from first principles, robust physical-world interaction, deep contextual judgment in novel situations without precedent in training data, and the social and emotional labor that constitutes a surprisingly large fraction of most jobs. MIT economist David Autor's decades of research, most accessibly summarized in "Work of the Past, Work of the Future" (AEA Papers and Proceedings, 2019), has consistently found that what's most resistant to automation is not raw intelligence but situational adaptability: the ability to handle the unexpected, the interpersonal, the physically embodied.

Human beings, it turns out, are extraordinarily good at exactly those things. Not because we're smarter than AI at pattern matching (we aren't), but because we are constitutively different: embedded in physical reality, in social networks, in time, and in bodies, in ways that make our intelligence complementary to AI rather than simply redundant.

A Final Note on the Human Habit of Catastrophizing

History isn't a guarantee. But two hundred years of being wrong about this in the same direction is at least worth acknowledging. There’s one more thing worth sitting with: we systematically overestimate how fast technological change eliminates jobs, and systematically underestimate our capacity to adapt.

A 2013 Oxford study by Carl Benedikt Frey and Michael Osborne ("The Future of Employment: How Susceptible Are Jobs to Computerisation?") predicted that 47% of US jobs were at high risk of automation within two decades. That study was hugely influential — and hugely wrong in its timeline. A 2017 paper by Melanie Arntz, Terry Gregory, and Ulrich Zierahn ("Revisiting the Risk of Automation," Economics Letters) revised that figure down to around 9% by applying task-level analysis rather than occupation-level analysis. The difference came from recognizing that most occupations contain a wide mix of tasks, only some of which are automatable, and that humans within those occupations reorganize around the remaining tasks rather than simply disappearing.

The era of AI is an era of genuine change. The disruption is not illusory, the anxiety is not irrational, and the transition is not painless. But the idea that this represents an endpoint, that this is where the story of human work finally breaks, is contradicted by every comparable moment in economic history.

What we’re living through is a readjustment. Uncomfortable, fast-moving, unevenly distributed. And, if the last two hundred years of evidence mean anything at all, ultimately navigable.

The jobs of 2040 will look as alien to us as 'social media manager' looked to someone in 1995. They'll still exist. People will still complain about them.
